747s and Coding Agents

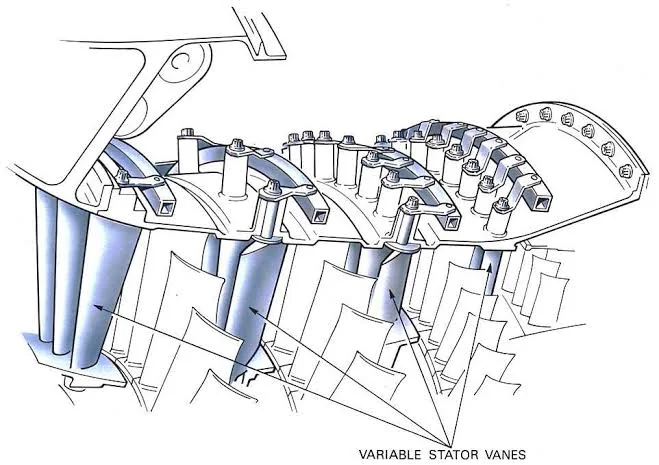

A couple years ago, I was on the way back from a work trip to Germany. I had been upgraded to business class, and I sat next to a Belgian 747 pilot, probably in his fifties or sixties. We talked a fair bit about our careers. I had left the Navy and started programming professionally less than a year before. He had been a pilot since shortly after graduating from university, and had flown the 747 for about twenty years. He had studied mechanical engineering at school, and he told me in great depth about the variable-geometry jet turbines in modern aircraft, which could remain efficient across a wide altitude range.

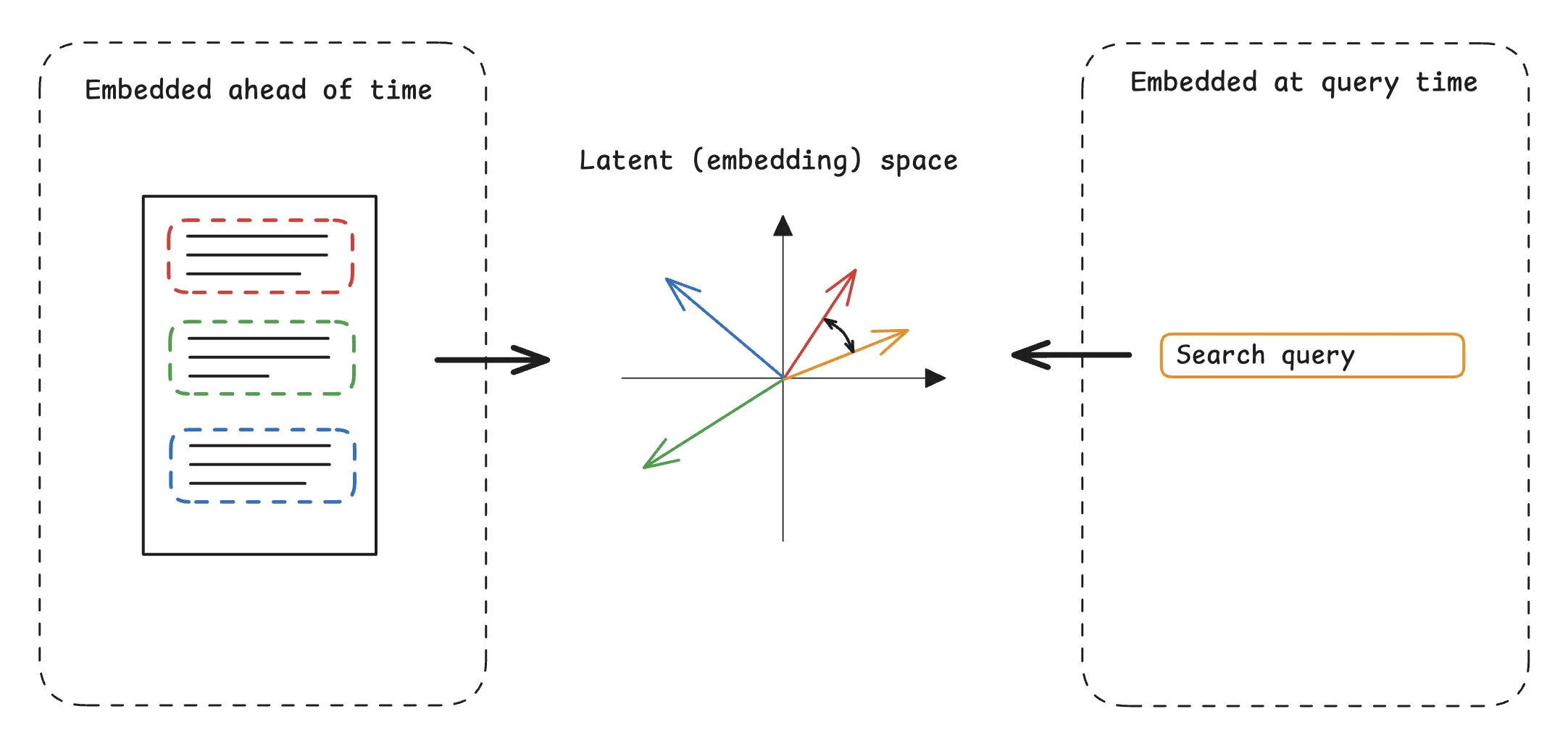

Tuning Semantic Search on JFMM.net

During my last couple years in the Navy, I became intimately familiar with submarine quality assurance (QA). I went to QA school and became the QA officer (QAO) of PCU New Jersey. As part of my responsibilities, I had to review work packages and qualify sailors as proficient in quality maintenance.

RL for Two-Month-Olds

I’ve spent the last month studying reinforcement learning. There are lots of great resources out there, and I’ve been focusing on Sutton and Barto and Spinning Up in Deep RL from OpenAI. Before this, I was really only familiar with supervised and unsupervised learning. I knew that reinforcement learning meant punishing or rewarding the computer, but that was basically it.

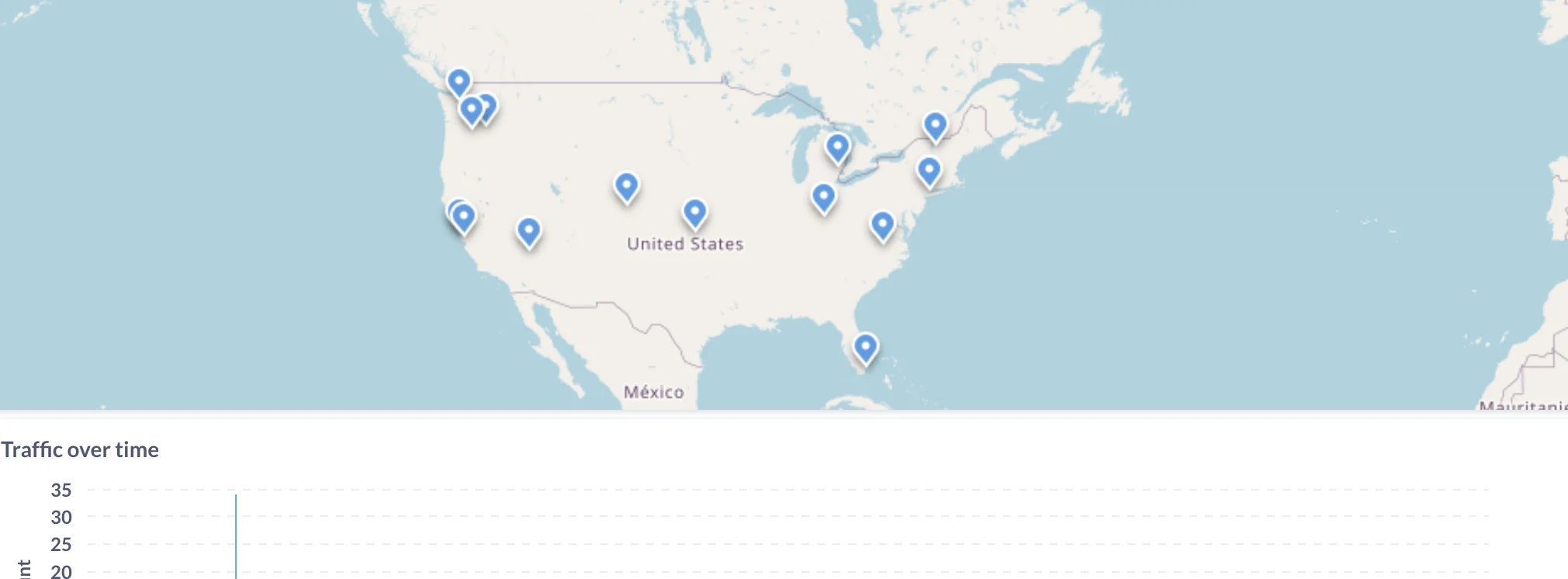

Rolling my Own Analytics

To the extent you can, avoid letting intermediaries come between you and your audience. In some types of work this is inevitable, but it’s so liberating to escape it that you might be better off switching to an adjacent type if that will let you go direct. — Paul Graham, How to Do Great Work

Integrating Random Functions on a Cluster with Temporal

In 2020, I read Lample and Charton’s Deep Learning for Symbolic Mathematics. I had graduated with a math degree less than two years before, and I thought it would be cool to apply neural networks to math. One candidate was the search for Lyapunov functions, which were crucial to my undergraduate research. Finding Lyapunov functions is like finding integrals. The two problems share a tantalizing property: solutions are easy to verify, but hard to compute. I tried to reproduce some of Lample and Charton’s work on my own, but I wasn’t a great programmer. I was also distracted by my day job: I spent 260 days at sea in 2020.

A few weeks ago I decided to give it another shot. I’ve changed a lot since 2020, and now programming is my day job. I succeeded this time, but I chose to write here about the parts I found hard and what surprised me about this project.